Spark Interpreter for Apache Zeppelin

Overview

Apache Spark is a fast and general-purpose cluster computing system. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. Apache Spark is supported in Zeppelin with Spark interpreter group which consists of below five interpreters.

| Name | Class | Description |

|---|---|---|

| %spark | SparkInterpreter | Creates a SparkContext and provides a Scala environment |

| %spark.pyspark | PySparkInterpreter | Provides a Python environment |

| %spark.r | SparkRInterpreter | Provides an R environment with SparkR support |

| %spark.sql | SparkSQLInterpreter | Provides a SQL environment |

| %spark.dep | DepInterpreter | Dependency loader |

Configuration

The Spark interpreter can be configured with properties provided by Zeppelin. You can also set other Spark properties which are not listed in the table. For a list of additional properties, refer to Spark Available Properties.

| Property | Default | Description |

|---|---|---|

| args | Spark commandline args | master | local[*] | Spark master uri. ex) spark://masterhost:7077 |

| spark.app.name | Zeppelin | The name of spark application. |

| spark.cores.max | Total number of cores to use. Empty value uses all available core. |

|

| spark.executor.memory | 1g | Executor memory per worker instance. ex) 512m, 32g |

| zeppelin.dep.additionalRemoteRepository | spark-packages, http://dl.bintray.com/spark-packages/maven, false; |

A list of id,remote-repository-URL,is-snapshot; for each remote repository. |

| zeppelin.dep.localrepo | local-repo | Local repository for dependency loader |

| zeppelin.pyspark.python | python | Python command to run pyspark with |

| zeppelin.spark.concurrentSQL | false | Execute multiple SQL concurrently if set true. |

| zeppelin.spark.maxResult | 1000 | Max number of Spark SQL result to display. |

| zeppelin.spark.printREPLOutput | true | Print REPL output |

| zeppelin.spark.useHiveContext | true | Use HiveContext instead of SQLContext if it is true. |

| zeppelin.spark.importImplicit | true | Import implicits, UDF collection, and sql if set true. |

Without any configuration, Spark interpreter works out of box in local mode. But if you want to connect to your Spark cluster, you'll need to follow below two simple steps.

1. Export SPARK_HOME

In conf/zeppelin-env.sh, export SPARK_HOME environment variable with your Spark installation path.

For example,

export SPARK_HOME=/usr/lib/spark

You can optionally set more environment variables

# set hadoop conf dir

export HADOOP_CONF_DIR=/usr/lib/hadoop

# set options to pass spark-submit command

export SPARK_SUBMIT_OPTIONS="--packages com.databricks:spark-csv_2.10:1.2.0"

# extra classpath. e.g. set classpath for hive-site.xml

export ZEPPELIN_INTP_CLASSPATH_OVERRIDES=/etc/hive/conf

For Windows, ensure you have winutils.exe in %HADOOP_HOME%\bin. Please see Problems running Hadoop on Windows for the details.

2. Set master in Interpreter menu

After start Zeppelin, go to Interpreter menu and edit master property in your Spark interpreter setting. The value may vary depending on your Spark cluster deployment type.

For example,

- local[*] in local mode

- spark://master:7077 in standalone cluster

- yarn-client in Yarn client mode

- mesos://host:5050 in Mesos cluster

That's it. Zeppelin will work with any version of Spark and any deployment type without rebuilding Zeppelin in this way. For the further information about Spark & Zeppelin version compatibility, please refer to "Available Interpreters" section in Zeppelin download page.

Note that without exporting

SPARK_HOME, it's running in local mode with included version of Spark. The included version may vary depending on the build profile.

SparkContext, SQLContext, SparkSession, ZeppelinContext

SparkContext, SQLContext and ZeppelinContext are automatically created and exposed as variable names sc, sqlContext and z, respectively, in Scala, Python and R environments.

Staring from 0.6.1 SparkSession is available as variable spark when you are using Spark 2.x.

Note that Scala/Python/R environment shares the same SparkContext, SQLContext and ZeppelinContext instance.

Dependency Management

There are two ways to load external libraries in Spark interpreter. First is using interpreter setting menu and second is loading Spark properties.

1. Setting Dependencies via Interpreter Setting

Please see Dependency Management for the details.

2. Loading Spark Properties

Once SPARK_HOME is set in conf/zeppelin-env.sh, Zeppelin uses spark-submit as spark interpreter runner. spark-submit supports two ways to load configurations.

The first is command line options such as --master and Zeppelin can pass these options to spark-submit by exporting SPARK_SUBMIT_OPTIONS in conf/zeppelin-env.sh. Second is reading configuration options from SPARK_HOME/conf/spark-defaults.conf. Spark properties that user can set to distribute libraries are:

| spark-defaults.conf | SPARK_SUBMIT_OPTIONS | Description |

|---|---|---|

| spark.jars | --jars | Comma-separated list of local jars to include on the driver and executor classpaths. |

| spark.jars.packages | --packages | Comma-separated list of maven coordinates of jars to include on the driver and executor classpaths. Will search the local maven repo, then maven central and any additional remote repositories given by --repositories. The format for the coordinates should be groupId:artifactId:version. |

| spark.files | --files | Comma-separated list of files to be placed in the working directory of each executor. |

Here are few examples:

SPARK_SUBMIT_OPTIONSinconf/zeppelin-env.shexport SPARK_SUBMIT_OPTIONS="--packages com.databricks:spark-csv_2.10:1.2.0 --jars /path/mylib1.jar,/path/mylib2.jar --files /path/mylib1.py,/path/mylib2.zip,/path/mylib3.egg"SPARK_HOME/conf/spark-defaults.confspark.jars /path/mylib1.jar,/path/mylib2.jar spark.jars.packages com.databricks:spark-csv_2.10:1.2.0 spark.files /path/mylib1.py,/path/mylib2.egg,/path/mylib3.zip

3. Dynamic Dependency Loading via %spark.dep interpreter

Note:

%spark.depinterpreter loads libraries to%sparkand%spark.pysparkbut not to%spark.sqlinterpreter. So we recommend you to use the first option instead.

When your code requires external library, instead of doing download/copy/restart Zeppelin, you can easily do following jobs using %spark.dep interpreter.

- Load libraries recursively from maven repository

- Load libraries from local filesystem

- Add additional maven repository

- Automatically add libraries to SparkCluster (You can turn off)

Dep interpreter leverages Scala environment. So you can write any Scala code here.

Note that %spark.dep interpreter should be used before %spark, %spark.pyspark, %spark.sql.

Here's usages.

%spark.dep

z.reset() // clean up previously added artifact and repository

// add maven repository

z.addRepo("RepoName").url("RepoURL")

// add maven snapshot repository

z.addRepo("RepoName").url("RepoURL").snapshot()

// add credentials for private maven repository

z.addRepo("RepoName").url("RepoURL").username("username").password("password")

// add artifact from filesystem

z.load("/path/to.jar")

// add artifact from maven repository, with no dependency

z.load("groupId:artifactId:version").excludeAll()

// add artifact recursively

z.load("groupId:artifactId:version")

// add artifact recursively except comma separated GroupID:ArtifactId list

z.load("groupId:artifactId:version").exclude("groupId:artifactId,groupId:artifactId, ...")

// exclude with pattern

z.load("groupId:artifactId:version").exclude(*)

z.load("groupId:artifactId:version").exclude("groupId:artifactId:*")

z.load("groupId:artifactId:version").exclude("groupId:*")

// local() skips adding artifact to spark clusters (skipping sc.addJar())

z.load("groupId:artifactId:version").local()

ZeppelinContext

Zeppelin automatically injects ZeppelinContext as variable z in your Scala/Python environment. ZeppelinContext provides some additional functions and utilities.

Object Exchange

ZeppelinContext extends map and it's shared between Scala and Python environment.

So you can put some objects from Scala and read it from Python, vice versa.

// Put object from scala

%spark

val myObject = ...

z.put("objName", myObject)

// Exchanging data frames

myScalaDataFrame = ...

z.put("myScalaDataFrame", myScalaDataFrame)

val myPythonDataFrame = z.get("myPythonDataFrame").asInstanceOf[DataFrame]

# Get object from python

%spark.pyspark

myObject = z.get("objName")

# Exchanging data frames

myPythonDataFrame = ...

z.put("myPythonDataFrame", postsDf._jdf)

myScalaDataFrame = DataFrame(z.get("myScalaDataFrame"), sqlContext)

Form Creation

ZeppelinContext provides functions for creating forms.

In Scala and Python environments, you can create forms programmatically.

%spark

/* Create text input form */

z.input("formName")

/* Create text input form with default value */

z.input("formName", "defaultValue")

/* Create select form */

z.select("formName", Seq(("option1", "option1DisplayName"),

("option2", "option2DisplayName")))

/* Create select form with default value*/

z.select("formName", "option1", Seq(("option1", "option1DisplayName"),

("option2", "option2DisplayName")))

%spark.pyspark

# Create text input form

z.input("formName")

# Create text input form with default value

z.input("formName", "defaultValue")

# Create select form

z.select("formName", [("option1", "option1DisplayName"),

("option2", "option2DisplayName")])

# Create select form with default value

z.select("formName", [("option1", "option1DisplayName"),

("option2", "option2DisplayName")], "option1")

In sql environment, you can create form in simple template.

%spark.sql

select * from ${table=defaultTableName} where text like '%${search}%'

To learn more about dynamic form, checkout Dynamic Form.

Matplotlib Integration (pyspark)

Both the python and pyspark interpreters have built-in support for inline visualization using matplotlib, a popular plotting library for python. More details can be found in the python interpreter documentation, since matplotlib support is identical. More advanced interactive plotting can be done with pyspark through utilizing Zeppelin's built-in Angular Display System, as shown below:

Interpreter setting option

You can choose one of shared, scoped and isolated options wheh you configure Spark interpreter. Spark interpreter creates separated Scala compiler per each notebook but share a single SparkContext in scoped mode (experimental). It creates separated SparkContext per each notebook in isolated mode.

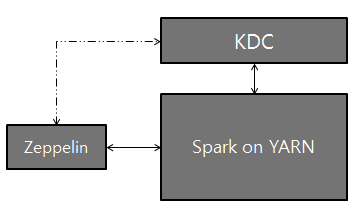

Setting up Zeppelin with Kerberos

Logical setup with Zeppelin, Kerberos Key Distribution Center (KDC), and Spark on YARN:

Configuration Setup

On the server that Zeppelin is installed, install Kerberos client modules and configuration, krb5.conf. This is to make the server communicate with KDC.

Set

SPARK_HOMEin[ZEPPELIN_HOME]/conf/zeppelin-env.shto use spark-submit (Additionally, you might have to setexport HADOOP_CONF_DIR=/etc/hadoop/conf)Add the two properties below to Spark configuration (

[SPARK_HOME]/conf/spark-defaults.conf):spark.yarn.principal spark.yarn.keytabNOTE: If you do not have permission to access for the above spark-defaults.conf file, optionally, you can add the above lines to the Spark Interpreter setting through the Interpreter tab in the Zeppelin UI.

That's it. Play with Zeppelin!